In this article, we explain how to create a minimal test plan in data engineering. We discuss the importance of ensuring process quality with a detailed and documented test plan: what, how, and why to test.

Is It Possible to Code Without Errors?

We know it’s impossible to code without errors. To address this, software developers must perform all necessary tests before releasing the program to users to ensure it works properly.

Testing software to confirm it functions correctly is essential, yet it doesn’t always happen. In the best cases, the tests conducted are sufficient. However, it’s also common for developers to test too little or to test poorly.

Data engineering adds another layer of complexity: beyond testing the software, the data must also be tested. The responsibility for proper testing lies with data engineering teams. However, it’s not just about testing but also about providing evidence that the tests were performed.

The Importance of Documenting Evidence in Data Engineering Tests: Quality Assurance for Teams and Clients

Documenting the evidence of tests conducted in data engineering is crucial for two main reasons:

- For ourselves: To demonstrate that the tests were actually performed. If we don’t leave this documentation, the test effectively doesn’t exist.

- For others: If a leader, client, or colleague has evidence of the tests and an issue arises later, they can understand which tests were conducted and which weren’t. This helps narrow down the root cause of the problem, enabling quicker and simpler resolution.

What Is the Difference Between a “Test Case,” “Testing,” and “Proving We Tested”?

These three concepts may seem similar, but they are not. Below, we explain the difference between each one:

- Test Case: A set of conditions or variables under which it will be determined whether an application, process, feature, or behavior is acceptable or not. In other words, a test case defines what needs to be tested.

- Testing: The act of verifying the proper functioning of an application or process. This may involve none, one, or several predefined test cases.

- Proving We Tested: Providing concrete evidence of the tests performed.

The difference between these three concepts may seem subtle, but it is significant. The first tells us what to test, the second involves executing the tests, and the third ensures we can demonstrate what tests were performed and their results. Defining test cases and conducting tests is pointless if we don’t document evidence of doing so.
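
To make the distinction concrete, here is a minimal sketch in Python. The names `DataTestCase`, `Evidence`, and `run_test`, as well as the sample rows, are hypothetical and chosen only for illustration; they do not come from any specific testing tool.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class DataTestCase:
    """Test case: WHAT to test, expressed as a named pass/fail check."""
    name: str
    check: Callable[[], bool]


@dataclass(frozen=True)
class Evidence:
    """Proving we tested: a record of which test ran, when, and its result."""
    test_name: str
    passed: bool
    executed_at: str  # ISO 8601 timestamp in UTC


def run_test(case: DataTestCase) -> Evidence:
    """Testing: execute the check and return evidence of the outcome."""
    passed = bool(case.check())
    return Evidence(case.name, passed, datetime.now(timezone.utc).isoformat())


# Hypothetical rows standing in for a loaded table.
rows = [{"order_id": 1}, {"order_id": 2}, {"order_id": 3}]

case = DataTestCase(
    name="order_id is unique",
    check=lambda: len({r["order_id"] for r in rows}) == len(rows),
)
evidence = run_test(case)
print(evidence)
```

The test case exists before anything runs, `run_test` is the act of testing, and the returned `Evidence` object is what can be stored and shown to others later.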

The phrase, “It worked when I tested it,” is very common in data engineering. This may well be true, but without evidence of the tests, it becomes one person’s word against the facts. Once again, it is the data engineer’s responsibility to ensure proper documentation of the tests performed.

How to Create a Test Plan in Data Engineering

A good test plan requires careful planning. Documenting evidence forces us to think about what we are going to test before performing the test. Below are some examples of the minimum components a test plan should include:

- Every dashboard (visualization) and every data engineering deliverable must have a minimal test plan that is executed and documented.

- Each test must be properly documented by taking screenshots, capturing query results, or using another method to provide verifiable evidence that the test was executed and successfully passed.

- If a test fails, the issue must be resolved and all tests must be re-executed, not only the one that failed, to ensure overall consistency.

- The evidence document must be attached to the corresponding task (DevOps/Jira/Trello/etc.) to document the execution.

- Executing the “Minimal Test Plan” does not exempt teams from conducting additional tests to ensure the quality of the final product.

- The data engineer is responsible for the quality of the product delivered, including performing any additional tests they deem necessary to ensure quality.

- If an error is detected after delivery or changes are made to reports or processes, all tests must be executed again.
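
Two of these rules, capturing query results as evidence and re-executing every test after a fix, can be sketched as follows. This is only an illustration under stated assumptions: it uses an in-memory SQLite database in place of a real warehouse, and the `sales` table, the check names, and the helpers `run_check` and `run_plan` are all hypothetical.

```python
import json
import sqlite3
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def run_check(conn, name, query, expected):
    """Run one query and return a record that can be stored as evidence."""
    result = [list(row) for row in conn.execute(query).fetchall()]
    return {
        "test": name,
        "query": query,
        "result": result,
        "expected": expected,
        "status": "OK" if result == expected else "Failed",
        "executed_at": datetime.now(timezone.utc).isoformat(),
    }


def run_plan(conn, checks, evidence_path):
    """Run every check (never a subset) and write the evidence to a JSON file."""
    records = [run_check(conn, *check) for check in checks]
    Path(evidence_path).write_text(json.dumps(records, indent=2))
    return all(r["status"] == "OK" for r in records)


# Hypothetical table standing in for a pipeline's output.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (id INTEGER, amount REAL)")
conn.executemany("INSERT INTO sales VALUES (?, ?)", [(1, 10.0), (2, 25.5), (3, None)])

checks = [
    ("row count matches source", "SELECT COUNT(*) FROM sales", [[3]]),
    ("no null amounts", "SELECT COUNT(*) FROM sales WHERE amount IS NULL", [[0]]),
]

evidence_file = Path(tempfile.mkdtemp()) / "evidence.json"
first_run = run_plan(conn, checks, evidence_file)

# The second check fails, so the issue is fixed and the WHOLE plan is re-run.
conn.execute("UPDATE sales SET amount = 0.0 WHERE amount IS NULL")
second_run = run_plan(conn, checks, evidence_file)
print(first_run, second_run)
```

In this sketch the evidence file is overwritten on each run, so it always reflects the latest full execution. A team that wants to keep the history of failed runs could write one file per run instead and attach the final one to the task.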

Key Elements of a Test Plan in Data Engineering

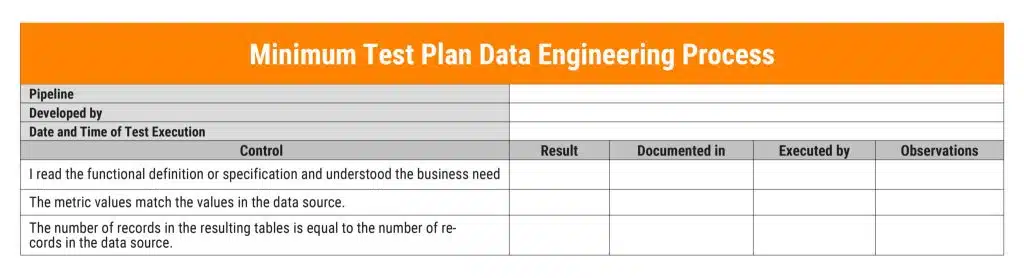

A test plan can be created using a simple tool like Excel, Google Sheets, or any similar platform accessible to all team members. Of course, using dedicated tools for this purpose is even better. The document should include the following:

- Pipeline to be validated: Specify which pipeline is being tested, who developed it, and the date of the tests.

- Description of the controls performed: For example, verifying that business definitions were reviewed/understood, checking whether metric values match the data source, identifying any out-of-range (outlier) values, etc.

- Test results: Indicate whether the result was “OK,” “Failed,” or “N/A.”

- Documentation location: Specify where the evidence of the test is stored.

- Executor: Identify who performed the test.

- Observations: Include a column for any additional remarks.
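
Two of the controls mentioned in the list above, checking that a metric matches the data source and identifying out-of-range values, could be automated roughly as follows. The function names, the sample amounts, the tolerance, and the accepted range are assumptions made for this sketch; the real thresholds would come from the business definitions.

```python
import math


def metric_matches_source(reported, source_values, tolerance=0.01):
    """Check that a reported total equals the sum of the source values."""
    return math.isclose(
        reported, math.fsum(source_values), rel_tol=0.0, abs_tol=tolerance
    )


def out_of_range(values, lower, upper):
    """Return the values that fall outside the agreed [lower, upper] range."""
    return [v for v in values if not lower <= v <= upper]


# Hypothetical source data and the total shown on a dashboard.
source_amounts = [120.0, 80.5, 99.5, 15000.0]
dashboard_total = 15300.0

outliers = out_of_range(source_amounts, lower=0.0, upper=1000.0)
results = {
    "metric matches source": (
        "OK" if metric_matches_source(dashboard_total, source_amounts) else "Failed"
    ),
    "no out-of-range values": "OK" if not outliers else "Failed",
}
print(results, outliers)
```

Each entry in `results` maps directly to the "Test results" column of the plan, and the list of outliers is the kind of detail that belongs in the observations.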

Here is an example of how to document a data engineering process:
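
As a minimal sketch, the elements listed above can be written out as CSV text that opens in Excel or Google Sheets. The column names, pipeline name, people, dates, and evidence paths below are hypothetical placeholders, not taken from a real project.

```python
import csv
import io

FIELDS = [
    "pipeline", "developer", "test_date", "control",
    "result", "evidence_location", "executor", "observations",
]
ALLOWED_RESULTS = {"OK", "Failed", "N/A"}


def build_test_plan(rows):
    """Validate the rows and render the test plan as CSV text."""
    for row in rows:
        if row["result"] not in ALLOWED_RESULTS:
            raise ValueError(f"Unexpected result: {row['result']!r}")
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Values shared by every row of this (hypothetical) plan.
common = {
    "pipeline": "sales_daily_load",
    "developer": "A. Developer",
    "test_date": "2025-01-15",
    "executor": "B. Tester",
}

plan_rows = [
    {**common, "control": "Metric values match the data source",
     "result": "OK", "evidence_location": "evidence/sales_daily_load/totals.png",
     "observations": ""},
    {**common, "control": "No out-of-range values",
     "result": "Failed", "evidence_location": "evidence/sales_daily_load/outliers.csv",
     "observations": "One amount above the agreed maximum"},
]

plan_csv = build_test_plan(plan_rows)
print(plan_csv)
```

Restricting the result column to "OK", "Failed", and "N/A" keeps the plan easy to filter and makes an unfinished or ambiguous entry stand out immediately.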

Conclusion

There’s an old saying: “It’s not enough to be honest; you must also appear honest.” Over time, this was simplified to: It’s not enough to be; you must also appear to be.

Proving we tested is how we, in data engineering, manage both to be and to appear to be. We are not only responsible for ensuring that processes work; we must also guarantee the accuracy of the data.

Documenting evidence of the tests we performed is essential. If there are predefined test cases, we use them; if not, we define a minimal set of tests that we deem appropriate and leave evidence that we performed them.

This set of tests can be agreed upon with the team, the leader, the data user, or even with oneself. What must not happen is failing to test or being unable to prove that we tested.

This is one of the best practices we can apply in data engineering. A solid test plan helps us avoid failures that could lead to a loss of trust in our work. Consider the central role that data plays in organizations: if the information we provide to the business is incorrect, it could result in poor decision-making.

For this reason, regardless of the client or project, whether it was requested or not, we must test and provide clear evidence that the tests were performed and passed. Always.

–

This article was originally written in Spanish and translated into English using ChatGPT.

–

* This content was originally published on Datalytics.com. Datalytics and Lovelytics merged in January 2025.